I previously looked at the NetNut proxies. This post reviews the Webshare proxy service.

Why use a proxy?

I wanted to browse hardware at The Home Depot. But I live in the UK and found I couldn’t access the site.

Akamai (the CDN serving The Home Depot’s site) is blocking my request. Evidently people in the UK buying hardware in the US is a security concern. Perhaps they are concerned about us building some potent weaponry this side of the Atlantic? Maybe they feel threatened by our tea-powered toolboxes and metric bolts? Conceivably they are worried about us cornering the market on garden sheds?

Okay, you got me. I wanted to do a bit of scraping. This might be why requests from outside the US are blocked. But I’m cunning. I’m not going to let some parochial geo-blocking get in my way.

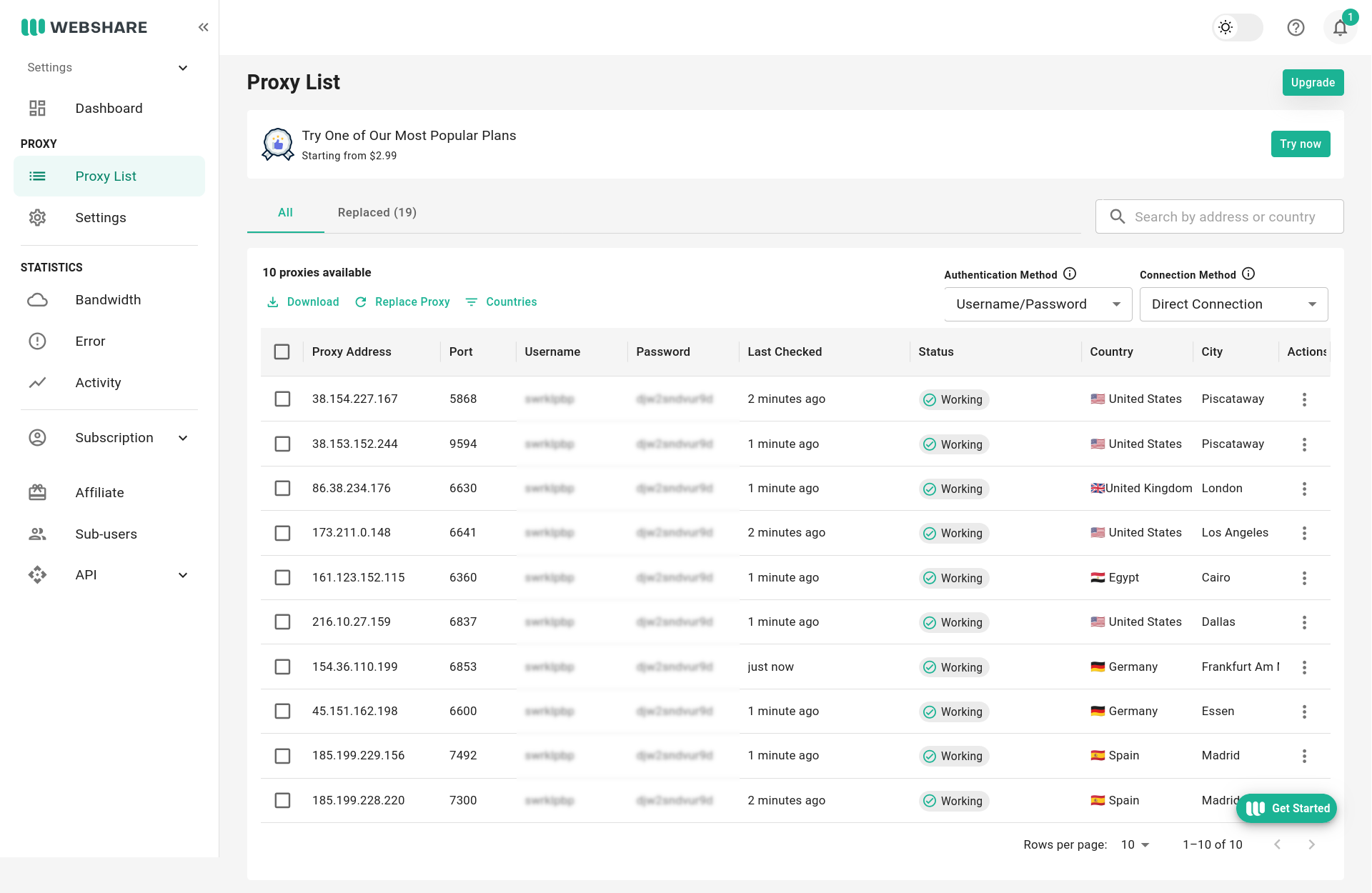

Proxy List

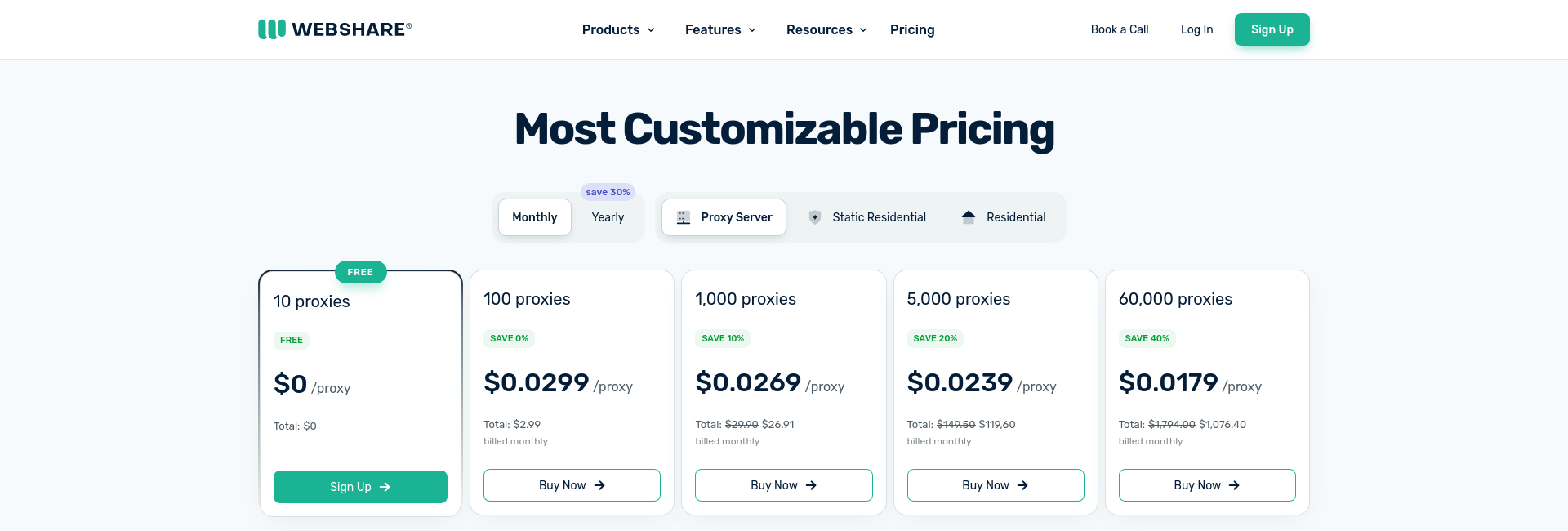

On the free plan I have access to 10 proxies. The proxy list below shows their IP addresses, ports, status and location. Even these have decent geographical distribution. The proxy list also gives the username and password used to authenticate to the proxy servers. As you’d hope, they all use the same username and password. 📢 These credentials are linked to your account. Don’t share them!

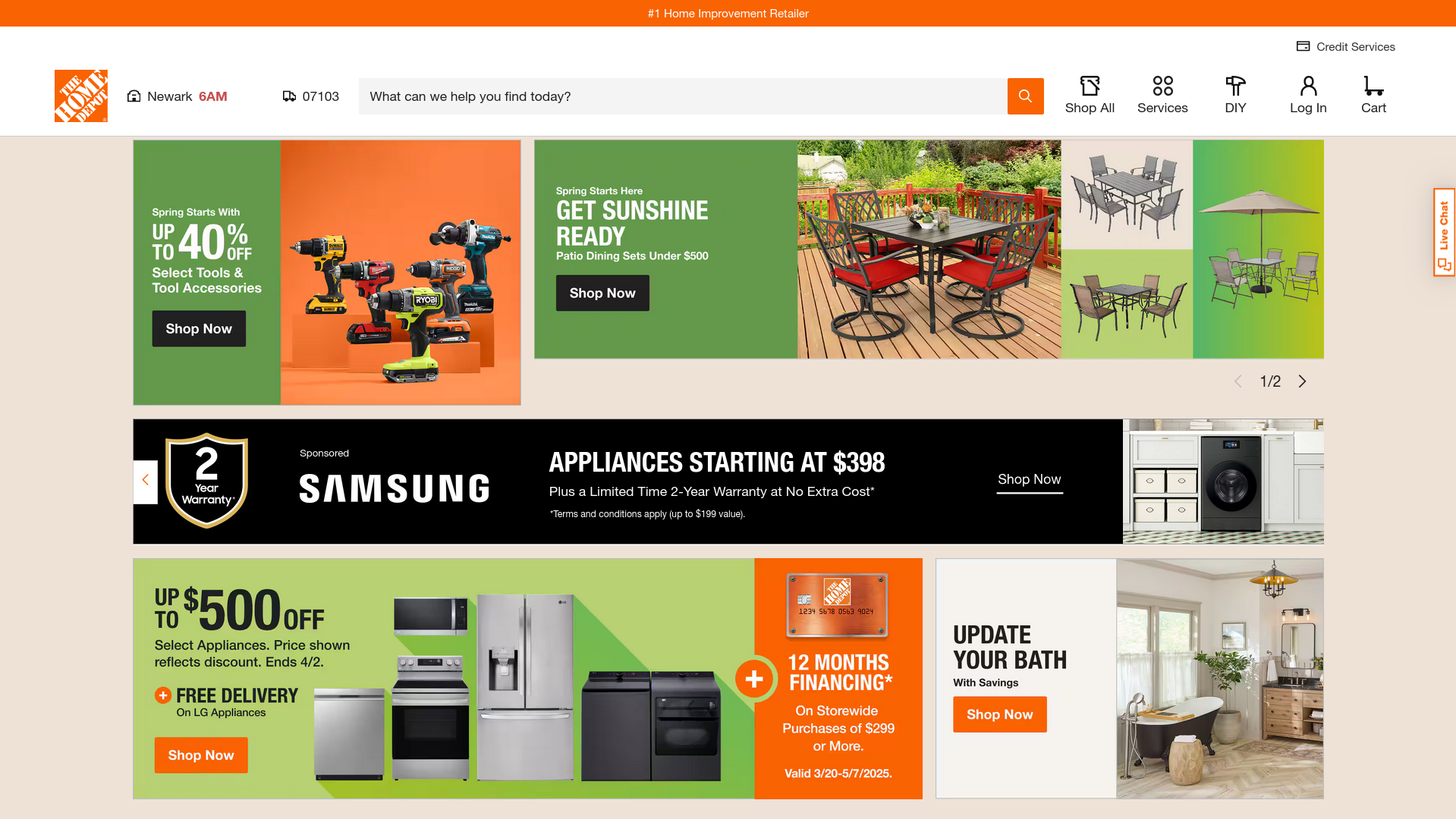

I took the address and port for the first US-based proxy, slapped them into my browser and 💥 BOOM 💥, instant access to The Home Depot. Currently browsing timber and nails.

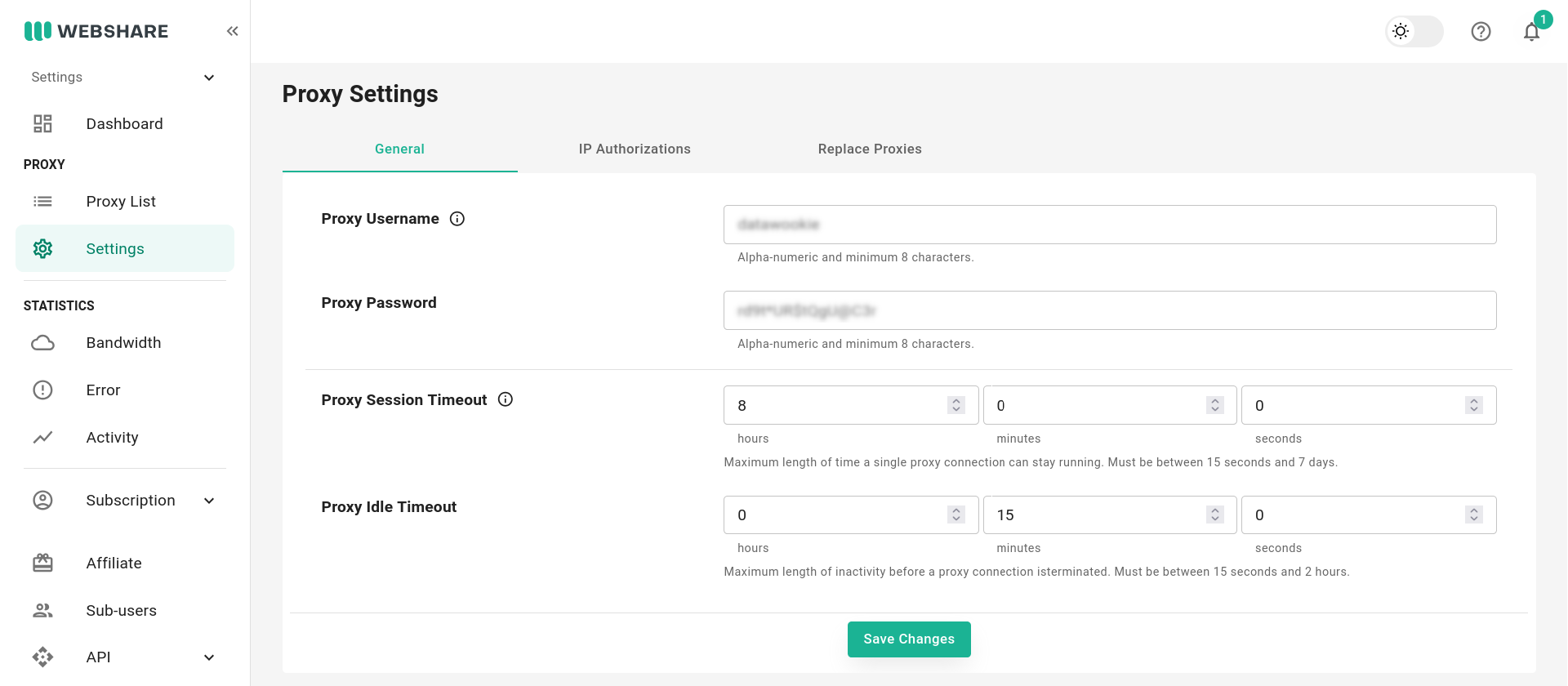

Account Settings

Selecting the Settings tab on Webshare gives access to your account settings.

General

You can set a custom username and password, which will then be applied to all proxies. I replaced the default username and generated a fresh password. You can also configure session and idle timeouts.

Proxy Replacement

This is a premium feature, so I don’t currently have access.

Statistics

The Statistics tab gives access to data on your proxy usage. I created a fresh account, so there’s not much to see here… yet!

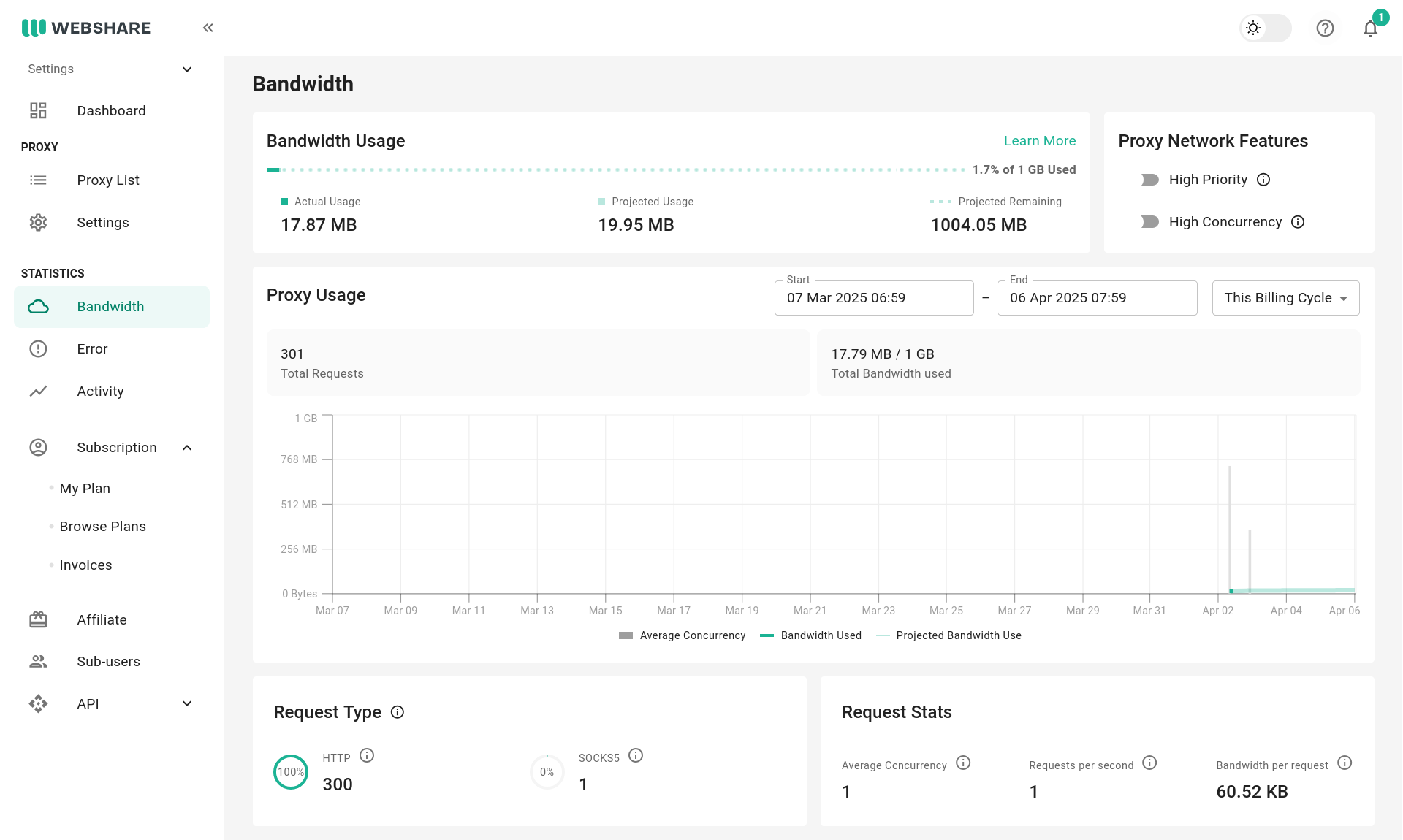

Bandwidth

The number of requests and volume of data can be rather surprising. Most modern websites unleash a deluge of requests just to open a single page (HTML, JavaScript, CSS, fonts, AJAX, etc.). To reduce the amount of data you’re sloshing back and forth through the proxy, you could disable image downloads in your browser. You’ll need to evaluate whether this is worth the effort. If you’re using the proxies for live browsing then it’ll certainly impact your experience.

Errors

Details of proxy failures. No failures for me yet.

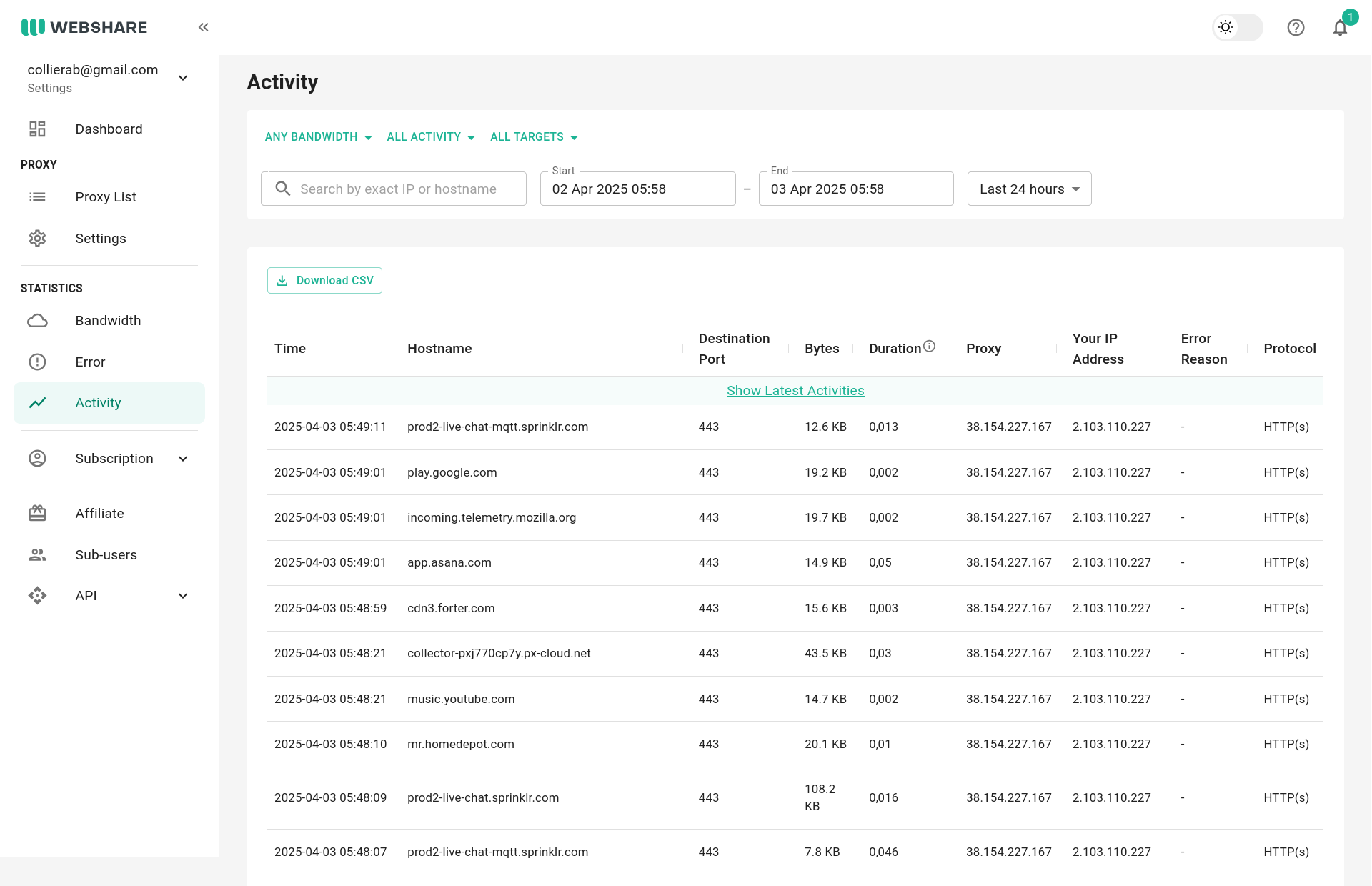

Activity

A detailed breakdown of which sites were accessed and when. This will include the data for all requests, so you’ll see a bunch of URLs that you never explicitly visited.

From the Command Line

We’ll start with a quick test from the command line using curl to request http://httpbin.org/ip, which should return our effective IP address. First without the proxy.

curl -L http://httpbin.org/ip

{

"origin": "2.104.105.1"

}

Rather than putting the proxy IP and port or authentication credentials in the command itself I’ll source them from environment variables.

echo "$PROXY_IP:$PROXY_PORT"

38.153.152.244:9594

I’ll also store the username and password in PROXY_USERNAME and PROXY_PASSWORD environment variables. 🚨 Don’t forget to export the variable definitions!

We’ll tie those all together in the http_proxy environment variable, which is respected by curl and a host of other tools like wget, lynx, apt, git and npm.

export http_proxy=http://$PROXY_USERNAME:$PROXY_PASSWORD@$PROXY_IP:$PROXY_PORT/

And now make the same curl request via the proxy.

curl -L http://httpbin.org/ip

{

"origin": "38.153.152.244"

}

From the perspective of HTTPBin the second request originated from the proxy server.
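One thing to watch when embedding credentials in a proxy URL: characters like `@`, `:` or `/` in a password will confuse URL parsing unless they are percent-encoded. Below is a minimal Python sketch of how the same URL could be assembled more defensively. The helper names `build_proxy_url` and `build_proxies` are hypothetical, and mapping the same `http://` endpoint to both schemes assumes the proxy will tunnel HTTPS traffic, which I haven't verified here.

```python
from urllib.parse import quote


def build_proxy_url(username, password, host, port):
    """Assemble an HTTP proxy URL with percent-encoded credentials.

    Hypothetical helper for illustration; not part of any Webshare tooling.
    """
    # safe="" makes quote() encode "@", ":" and "/" as well, since any of
    # these inside the credentials would otherwise break the URL structure.
    user = quote(username, safe="")
    secret = quote(password, safe="")
    return f"http://{user}:{secret}@{host}:{port}/"


def build_proxies(username, password, host, port):
    """Return a requests-style mapping covering HTTP and HTTPS targets.

    ASSUMPTION: the proxy also tunnels HTTPS requests via the same endpoint.
    """
    url = build_proxy_url(username, password, host, port)
    return {"http": url, "https": url}
```

The dictionary returned by `build_proxies()` has the shape expected by the `proxies` argument in `requests`, and the URL string could equally be exported as `http_proxy` (or `https_proxy` for HTTPS URLs) for command line tools.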

Use with Python

I most frequently use proxies from Python scripts. So let’s take a quick look at how this might work.

import os

import requests

PROXY_IP = os.getenv("PROXY_IP")

PROXY_PORT = os.getenv("PROXY_PORT")

PROXY_USERNAME = os.getenv("PROXY_USERNAME")

PROXY_PASSWORD = os.getenv("PROXY_PASSWORD")

proxies = {"http": f"http://{PROXY_USERNAME}:{PROXY_PASSWORD}@{PROXY_IP}:{PROXY_PORT}"}

response = requests.get("http://httpbin.org/ip") # Direct request.

print(response.text)

response = requests.get("http://httpbin.org/ip", proxies=proxies) # Request via proxy.

print(response.text)

I issued two requests. The first directly from my local IP and the second via the proxy.

{

"origin": "2.104.105.1"

}

{

"origin": "38.153.152.244"

}

API

Webshare also provides a well-documented API. Authentication is via an API key that’s generated from your dashboard. The API has an extensive range of endpoints for interacting with the service. The demo script below barely scratches the surface.

import os

import requests

API_KEY = os.getenv("API_KEY")

API_URL = "https://proxy.webshare.io/api/v2"

HEADERS = {"Authorization": f"Token {API_KEY}"}

# Get profile information.

response = requests.get(f"{API_URL}/profile/", headers=HEADERS)

# Get list of proxies.

response = requests.get(f"{API_URL}/proxy/list/?mode=direct", headers=HEADERS)

proxies = response.json()["results"]

# Get usage statistics.

response = requests.get(f"{API_URL}/stats/aggregate/", headers=HEADERS)

Here’s the information returned for the second proxy in the list.

{

"id": "d-16668161941",

"proxy_address": "38.153.152.244",

"port": 9594,

"valid": true,

"last_verification": "2025-04-06T21:41:59.883719-07:00",

"country_code": "US",

"city_name": "Piscataway",

"asn_name": "Server-Mania",

"asn_number": 55286,

"high_country_confidence": true,

"created_at": "2025-02-27T15:50:39.575672-08:00"

}

With a list of proxies retrieved via the API it would be simple to set up a local rotating proxy scheme.
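As a rough illustration of what such a rotating scheme might look like, here is a minimal sketch that cycles through the proxies in round-robin order. The function name `rotating_proxies` is hypothetical. It relies only on the `proxy_address`, `port` and `valid` fields shown in the record above, and it takes the username and password as arguments rather than assuming they are present in the API response.

```python
import itertools


def rotating_proxies(proxy_records, username, password):
    """Return an endless iterator of requests-style proxy mappings.

    Hypothetical helper: proxy_records is a list of dicts shaped like the
    record above. Records flagged as not valid are skipped.
    """
    mappings = []
    for record in proxy_records:
        if not record.get("valid", True):
            continue
        url = f"http://{username}:{password}@{record['proxy_address']}:{record['port']}"
        # ASSUMPTION: the same endpoint is used for HTTP and HTTPS targets.
        mappings.append({"http": url, "https": url})
    if not mappings:
        raise ValueError("no valid proxies to rotate through")
    # itertools.cycle repeats the list forever in round-robin order.
    return itertools.cycle(mappings)


# Possible usage (not executed here, since it needs network access):
#   rotation = rotating_proxies(proxies, PROXY_USERNAME, PROXY_PASSWORD)
#   response = requests.get("http://httpbin.org/ip", proxies=next(rotation))
```

Round-robin is just the simplest choice. Picking a proxy at random, or dropping one from the pool after repeated failures, would be equally reasonable variations.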

In addition to the GET endpoint for listing the available proxies (this is paginated if you have a premium subscription) there’s also a POST endpoint that will refresh the list of proxies.
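If the list is paginated, the pages need to be stitched together. The sketch below shows one way that might be done. It assumes each page of the response carries a `next` field holding the URL of the following page (or nothing on the last page) alongside the `results` list used earlier. That `next` field is an assumption on my part, so check the API documentation before relying on it. The function name `iter_proxies` and the `fetch_page` callable are hypothetical too.

```python
def iter_proxies(fetch_page, first_url):
    """Yield proxy records from every page of a paginated listing.

    fetch_page is any callable that takes a URL and returns the decoded
    JSON body as a dict. Keeping it separate from the paging logic makes
    the loop easy to exercise without network access.
    """
    url = first_url
    while url:
        payload = fetch_page(url)
        yield from payload["results"]
        # ASSUMPTION: "next" holds the next page's URL, or None at the end.
        url = payload.get("next")


# Possible wiring with requests (not executed here):
#   def fetch_page(url):
#       response = requests.get(url, headers=HEADERS, timeout=30)
#       response.raise_for_status()
#       return response.json()
#
#   proxies = list(iter_proxies(fetch_page, f"{API_URL}/proxy/list/?mode=direct"))
```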

Conclusion

Proxies are an indispensable tool in a web scraper’s arsenal. I’m impressed with Webshare. It’s easy to sign up. The proxies are fast and reliable. The dashboard is informative. And the API is a great feature for using the proxies programmatically.

This post is not sponsored by Webshare, and I have not received any financial incentive or compensation from Webshare for writing it. The opinions expressed here are entirely my own, based on my experience with their proxies.
