fast-neural-style: Real-Time Style Transfer

I followed up a reference to fast-neural-style on Twitter and spent a glorious hour experimenting with the code. Very cool stuff indeed. It’s documented in *Perceptual Losses for Real-Time Style Transfer and Super-Resolution* by Justin Johnson, Alexandre Alahi and Fei-Fei Li.

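The basic idea is to train a feed-forward convolutional neural network that transforms an input image in a single pass. Training uses perceptual loss functions, which compare high-level features extracted by a pretrained network rather than raw pixels, so the trained network applies one particular style to any image it is given.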
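To make "perceptual loss" a little more concrete, here is a minimal sketch of the two loss terms as I understand them from the paper: a content (feature reconstruction) loss and a style loss built on Gram matrices. It is plain Python on made-up toy feature maps, not the project's actual code. The function names and the numbers are my own assumptions, and in the real thing the features would come from a pretrained network.

```python
# Sketch of the two perceptual losses on toy feature maps (assumed layout:
# a list of C channels, each a flat list of H*W activations).

def gram_matrix(features):
    """Channel-by-channel correlations, divided by the number of entries."""
    scale = len(features) * len(features[0])
    return [
        [sum(a * b for a, b in zip(fi, fj)) / scale for fj in features]
        for fi in features
    ]

def style_loss(generated, style):
    """Squared Frobenius norm of the difference between the Gram matrices."""
    g_gen, g_sty = gram_matrix(generated), gram_matrix(style)
    return sum(
        (x - y) ** 2
        for row_gen, row_sty in zip(g_gen, g_sty)
        for x, y in zip(row_gen, row_sty)
    )

def content_loss(generated, content):
    """Mean squared difference between the feature maps themselves."""
    n = len(generated) * len(generated[0])
    return sum(
        (x - y) ** 2
        for ch_gen, ch_con in zip(generated, content)
        for x, y in zip(ch_gen, ch_con)
    ) / n

# Two channels over a 2x2 grid (hypothetical values).
features = [[1.0, 2.0, 3.0, 4.0], [0.0, 1.0, 0.0, 1.0]]
# The same activations with the spatial positions reversed.
shuffled = [[4.0, 3.0, 2.0, 1.0], [1.0, 0.0, 1.0, 0.0]]

print("style loss:  ", style_loss(features, shuffled))
print("content loss:", content_loss(features, shuffled))
```

In this toy case the style loss comes out as zero while the content loss does not. The Gram matrix only records which channels fire together, not where, so it captures texture and colour while ignoring layout.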

What is “style transfer”? You’ll see in a moment.

As a test image I’ve used my Twitter banner, which I’ve felt for a while was a little bland. It could definitely benefit from additional style.

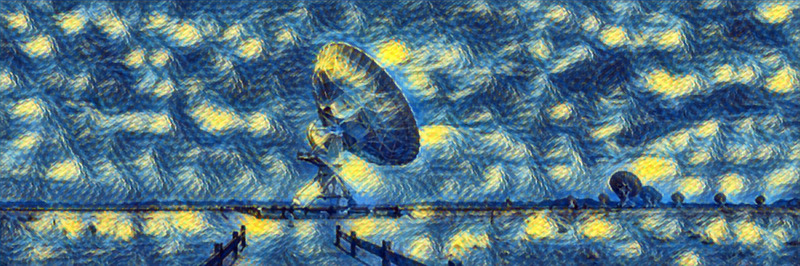

What about applying the style of van Gogh’s The Starry Night?

That’s pretty cool. A little repetitive, perhaps, but that’s probably due to the lack of structure in some areas of the input image.

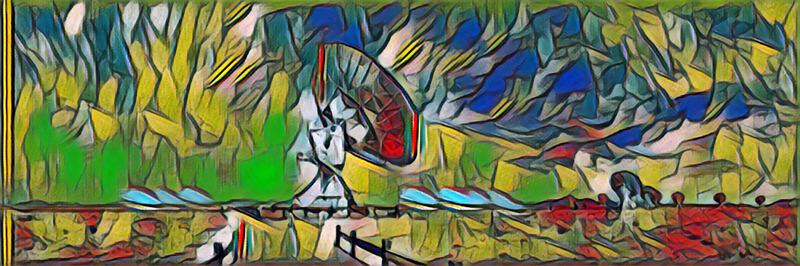

How about the style of Picasso’s La Muse?

Again, rather nice, but a little too repetitive for my liking. I can certainly imagine some input images on which this would work well.

Here’s another take on La Muse but this time using instance normalisation.

The repetition has vanished.
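For the curious, instance normalisation is usually described as normalising each channel of each image by its own mean and variance, rather than by statistics gathered over a whole batch. Here is a rough sketch of that operation in plain Python. It is my own illustration, not the code from fast-neural-style: the function name, the `eps` value and the sample numbers are assumptions, and the learned scale and shift are left out.

```python
import math

def instance_norm(image, eps=1e-5):
    """Normalise each channel of one image by its own mean and variance.

    `image` is a list of channels, each a flat list of activations.
    The learned scale and shift parameters are omitted in this sketch.
    """
    normalised = []
    for channel in image:
        mean = sum(channel) / len(channel)
        var = sum((x - mean) ** 2 for x in channel) / len(channel)
        std = math.sqrt(var + eps)  # eps avoids division by zero
        normalised.append([(x - mean) / std for x in channel])
    return normalised

# Hypothetical activations: the second channel is the first scaled by 10.
image = [[1.0, 2.0, 3.0, 4.0], [10.0, 20.0, 30.0, 40.0]]
out = instance_norm(image)

for channel in out:
    print([round(x, 3) for x in channel])
```

Both channels end up with zero mean and almost identical values, so the overall contrast of the input has been removed before the next layer sees it.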

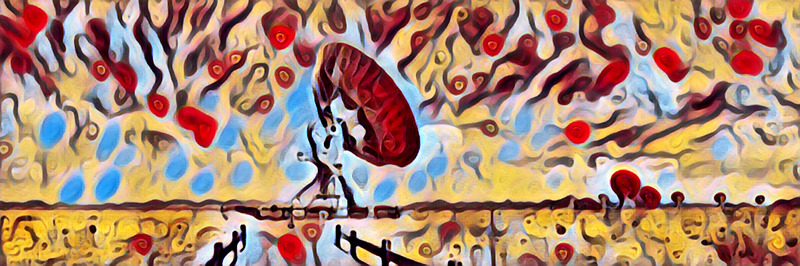

What about using some abstract contemporary art for styling?

That’s rather trippy, but I like it.

Using a mosaic for style creates an interesting effect. You can see how the segments of the mosaic are echoed in the sky.

Finally, Munch’s The Scream. The result is dark and foreboding and I just love it.

Maybe it’s just my hardware, but these transformations were not quite a “real-time” process. Nevertheless, the results were worth the wait. I certainly now have multiple viable options for an updated Twitter header image.