Using Large Maps with OSRM

How to deal with large data sets in OSRM? Some quick notes on processing monster PBF files and getting them ready to serve with OSRM.

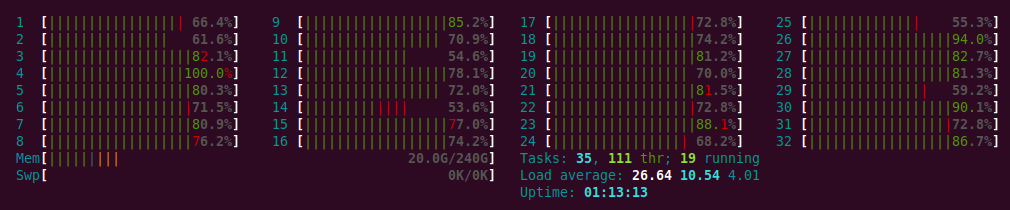

Something to consider up front: if you are RAM limited then this process is going to take a very long time due to swapping. It might make sense to spin up a big cloud instance (like an r4.8xlarge) for a couple of hours. You’ll get the job done much more quickly and it’ll definitely be worth it.

Test Data

To illustrate, let’s download two sets of data which might be considered “large”. These data are both for North America and represent the same information but in different formats.

wget http://download.geofabrik.de/north-america-latest.osm.pbf

wget http://download.geofabrik.de/north-america-latest.osm.bz2

The second of these files is a compressed XML file, which is what I have been routinely using before. However, as we will see shortly, the sheer size of this file makes it completely impractical!

When the above downloads complete you’ll find that the PBF file is 8 GB while the compressed XML file is 13 GB. I tried decompressing the latter and rapidly ran out of space on my 80 GB partition. Clearly the file was going to be too big to work with!
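Running out of disk halfway through is easy to avoid by checking the free space first. Here is a minimal sketch, assuming a POSIX `df`. The helper names `free_gib` and `has_room` and the 100 GiB threshold are made up for illustration and are not part of OSRM or any other tool.

```shell
#!/usr/bin/env bash
# free_gib DIR: print the free space, in whole GiB, on the filesystem holding DIR.
# (free_gib is a hypothetical helper name.)
free_gib() {
    # -P forces one POSIX-format line per filesystem; -k reports 1024-byte blocks.
    # Column 4 of the second line is the available space.
    df -Pk "$1" | awk 'NR == 2 { print int($4 / 1024 / 1024) }'
}

# has_room DIR NEEDED_GIB: succeed only if DIR has at least NEEDED_GIB free.
has_room() {
    [ "$(free_gib "$1")" -ge "$2" ]
}

# Check the current directory against an illustrative 100 GiB threshold.
if has_room . 100; then
    echo "enough space"
else
    echo "only $(free_gib .) GiB free"
fi
```

Pick a threshold that covers the download plus everything the later steps write out.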

STXXL

OSRM uses STXXL to handle external memory for out-of-core calculations. This allows OSRM to efficiently handle calculations which are too large to fit in RAM.

The default STXXL setup will suffice for many purposes. But you might want to tweak it, for example by pointing it at a 10 GB scratch file under /tmp.

echo "disk=/tmp/stxxl,10G,syscall" >.stxxl

Extract

How to make use of the PBF file? These files (and the XML files too, actually!) contain a vast array of data, much of which is irrelevant to routing. The osrm-extract tool will extract only the salient data, which turns out to be a relatively small subset of the original.

osrm-extract north-america-latest.osm.pbf

And, yes, it’s also going to take some time (especially if the job is too big to fit into RAM and it starts swapping). Get busy doing something else! Waiting will be frustrating.

This operation is going to consume memory like a beast! Unless you’re running on a machine with a hefty chunk of RAM you’ll need to ensure that you have plenty of swap space available. If you can, spin up a big machine. It’ll save you a lot of time (and probably work out more economical too). I found that an r3.2xlarge EC2 instance was quite adequate. You’ll need roughly 50 GB (physical RAM and swap space combined).
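It’s worth checking whether a machine comes anywhere near that figure before kicking off a job that runs for hours. The sketch below is Linux-only and sums the `MemTotal` and `SwapTotal` lines from `/proc/meminfo`. The function name `total_mem_gib` is hypothetical, and the 50 is just the rough estimate from this post, not a hard requirement.

```shell
#!/usr/bin/env bash
# total_mem_gib [MEMINFO_FILE]: physical RAM plus swap, in whole GiB.
# Reads the Linux /proc/meminfo format, where values are given in kB.
# (total_mem_gib is a hypothetical helper name.)
total_mem_gib() {
    awk '/^(MemTotal|SwapTotal):/ { kib += $2 }
         END { print int(kib / 1024 / 1024) }' "${1:-/proc/meminfo}"
}

needed=50   # rough estimate from this post for the North America extract

# Only run the check where /proc/meminfo exists (i.e. on Linux).
if [ -r /proc/meminfo ]; then
    have=$(total_mem_gib)
    if [ "$have" -lt "$needed" ]; then
        echo "RAM + swap is ${have} GiB, below ${needed} GiB: add swap or use a bigger machine"
    else
        echo "RAM + swap is ${have} GiB"
    fi
fi
```

The optional file argument exists only so that the function can be tried against a saved copy of `/proc/meminfo` from another machine.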

Contract

When osrm-extract is done, the next step is to run osrm-contract on the results.

osrm-contract north-america-latest.osrm

Again this is going to chug away for a while. Go for a run. Make dinner. Knit.

On my 4-core EC2 instance that ran overnight. When it was done the processed files totalled 37 GB. It’s possible that not all of those files are necessary for routing calculations, but I’m going to hang onto all of them for now.
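Note how the file name changes between the two steps: osrm-extract is given the `.osm.pbf` file, while osrm-contract is given a name ending in `.osrm`. Since both steps are slow, it can be handy to chain them so the second starts as soon as the first succeeds. This is a sketch only. `osrm_name` and `prepare` are hypothetical helper names, and the two tools are invoked exactly as shown in this post, so adjust the arguments if your OSRM version expects more.

```shell
#!/usr/bin/env bash
# osrm_name INPUT: map e.g. north-america-latest.osm.pbf to
# north-america-latest.osrm, keeping any leading directory.
# (osrm_name is a hypothetical helper; only the .osm.pbf suffix is handled.)
osrm_name() {
    printf '%s.osrm\n' "${1%.osm.pbf}"
}

# prepare INPUT: run the extract step, then contract only if extract succeeded.
# Defined but not called here, since it needs OSRM installed and a lot of time.
prepare() {
    osrm-extract "$1" && osrm-contract "$(osrm_name "$1")"
}

# Show the name the contract step would be given.
osrm_name north-america-latest.osm.pbf
```

With OSRM installed, `prepare north-america-latest.osm.pbf` would run both steps back to back.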

Serve

At this stage you’re ready to fire up the routing server.

osrm-routed north-america-latest.osrm

Due to the size of the data it will take a short while for the server to be ready. When you see the following log message it’s ready to accept requests.

[info] Listening on: 0.0.0.0:5000

[info] running and waiting for requests

Check that it’s running on the default port.

lsof -i :5000

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

osrm-rout 25517 ubuntu 14u IPv4 421436 0t0 TCP *:5000 (LISTEN)

Go ahead and start submitting requests on port 5000. Your lengthy labours will start paying off.

curl -s "http://localhost:5000/nearest/v1/driving/-106.619414,35.084085" | jq

    {
      "waypoints": [
        {
          "nodes": [
            2540747742,
            2540747745
          ],
          "location": [
            -106.618295,
            35.083693
          ],
          "hint": "DpAthK_QToQAAAAABwAAAAwAAADCAAAAAAAAAAcAAAAMAAAAwgAAAB5CAABJIqX5rVUXAuodpfk1VxcCAQCPAQABEL4=",
          "name": "",
          "distance": 110.789041
        }
      ],
      "code": "Ok"
    }
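One thing that is easy to trip over in the request is the coordinate order: it’s longitude first, then latitude. A small wrapper makes that harder to get wrong. This is a sketch under a few assumptions: `nearest_url` and `snapped_location` are hypothetical helper names, the host and port default to the values used in this post, and the second function needs curl, jq and a running osrm-routed, so it is defined but not called.

```shell
#!/usr/bin/env bash
# nearest_url LON LAT [HOST] [PORT]: build a request URL for the nearest service.
# Coordinates are given as longitude, then latitude.
# (nearest_url is a hypothetical helper name.)
nearest_url() {
    printf 'http://%s:%s/nearest/v1/driving/%s,%s\n' \
        "${3:-localhost}" "${4:-5000}" "$1" "$2"
}

# snapped_location LON LAT: print "lon,lat" of the first waypoint in the reply.
# Needs curl, jq and a running server, so it is only defined here.
snapped_location() {
    curl -s "$(nearest_url "$1" "$2")" |
        jq -r '.waypoints[0].location | "\(.[0]),\(.[1])"'
}

# Print the URL for the point queried in this post.
nearest_url -106.619414 35.084085
```

With the server up, `snapped_location -106.619414 35.084085` would print the `location` pair from a response like the one in this post.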