Some things that got my attention this week:

- Titan Image Generator in AWS Bedrock

- AWS Transcribe Supports 100+ Languages

- Cron Jobs in Vercel

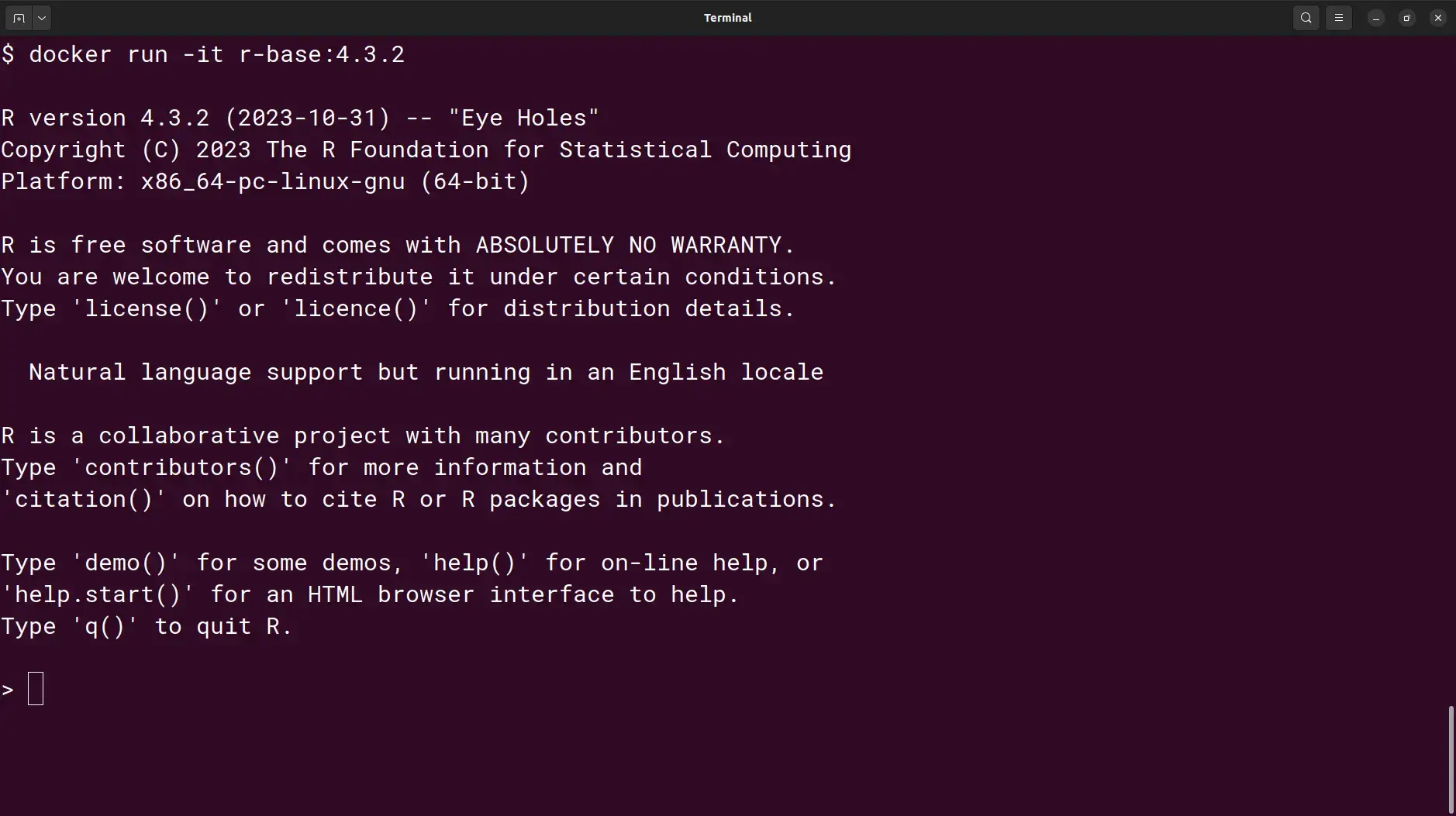

- R 4.3.2

- Spark 3.4.2

- Keras 3.0.0 and

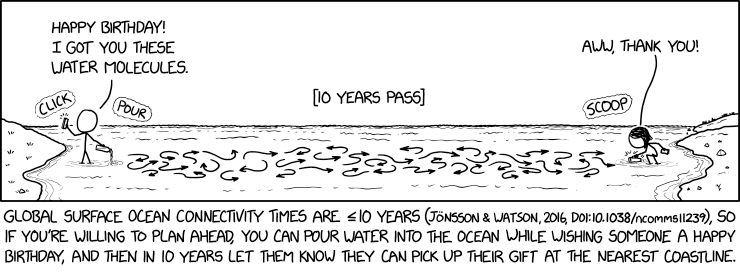

- Oceanography Gift.

Titan Image Generator in AWS Bedrock

AWS Bedrock now includes the Titan Image Generator, which can rapidly create and refine studio-quality, realistic images from natural language prompts. The Titan Image Generator is not a standalone application, but rather intended for integration into other products via Bedrock.

AWS Transcribe Supports 100+ Languages

Amazon announced that its Transcribe automatic speech recognition service now supports more than 100 languages (see list of supported languages). The new model, trained on millions of hours of unlabeled audio data, also provides substantial improvements in transcription accuracy.

Cron Jobs in Vercel

It’s now possible to use cron jobs to automate tasks in Vercel. This feature is available on all plans and will be useful for things like backups and notifications.

R 4.3.2

A new patch version of R (4.3.2, codename Eye Holes) was released on 31 October 2023. It comes with a number of bug fixes and a few new features. Take a look at the release notes to see what’s new. Also, see how this fits into the overall history of the development of R.

Get the new release from CRAN or using your package manager. Docker images are also available.

Spark 3.4.2

Spark 3.4.2 was released on 30 November 2023. This is a stable maintenance release with some dependency updates. See the release notes for details.

Keras 3.0.0

A new major version of Keras (3.0.0) was announced on 28 November 2023. This release is a complete rewrite of the framework and includes a number of major changes. Highlights of the release are listed below.

- Support for TensorFlow, JAX and PyTorch backends (cross-framework compatibility; ability to switch between backends).

- Dynamic selection of best backend per model.

- High compatibility with Keras 2 with a detailed migration guide.

- Pretrained models (like BERT, OPT and Whisper) for all backends.

- Stateless API methods suited to functional programming.

- Support for data-parallel and model-parallel distributed training.
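As a rough illustration of the backend switching mentioned in the highlights, here is a minimal sketch. It assumes Keras 3 reads the `KERAS_BACKEND` environment variable when it is first imported and that the accepted names are `tensorflow`, `jax` and `torch`. The `select_backend` helper is hypothetical (it is not part of Keras), and the import is guarded so the snippet still runs where Keras is not installed.

```python
import os

# Backend names assumed to be accepted by Keras 3 via KERAS_BACKEND.
SUPPORTED_BACKENDS = ("tensorflow", "jax", "torch")


def select_backend(name: str) -> str:
    """Hypothetical helper: set KERAS_BACKEND before the first `import keras`."""
    if name not in SUPPORTED_BACKENDS:
        raise ValueError(
            f"unknown backend {name!r}; expected one of {SUPPORTED_BACKENDS}"
        )
    os.environ["KERAS_BACKEND"] = name
    return name


# The variable must be set before Keras is imported for the first time.
select_backend("jax")

try:
    import keras  # reads KERAS_BACKEND at import time
except ImportError:
    keras = None  # Keras (or the chosen backend) is not installed here

if keras is not None:
    # Report which backend Keras actually loaded.
    print("Keras backend:", keras.backend.backend())
```

Changing the backend after Keras has been imported generally has no effect in the same process, so the choice is best made once, at the top of a script or in the shell environment.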

Oceanography Gift

If you’re not entirely sure why this is funny or what it means, then take a look at the explanation.